Gollem: How We Build Production LLM Agents in Go

Gollem is an open-source Go framework for agentic AI applications. It gives you a unified interface to OpenAI, Claude, and Gemini, a tool execution pipeline with middleware for policy enforcement, pluggable behavioral strategies (ReAct, Plan&Execute, Reflexion), portable session history, and MCP integration - all in one package designed for production workloads. At Webdelo, we evaluated gollem when building AI agent infrastructure for B2B clients, and this article shares what we found.

Gartner predicts that 40% of enterprise applications will include task-specific AI agents by 2026, up from under 5% in 2025. The same research notes that over 40% of agentic AI projects will be canceled by 2027 - not because the models are bad, but because teams underestimated governance, security, and operational complexity.

That is the exact problem gollem is designed for. Not "how to call an LLM," but "how to run an LLM agent reliably in production, with auditing, loop limits, testable behavior, and security policies around tool calls." This article walks through how we use it and what makes it worth considering for your next AI project.

Why We Use Go for LLM Agent Services

Go gives us three things that matter for production agent services: static typing that pins LLM response schemas to concrete types so mismatches fail at the parsing boundary, goroutines for true parallel tool execution without GIL constraints, and single-binary deployment that eliminates runtime dependency headaches. Python works well for prototypes. For services handling thousands of concurrent agent sessions, it introduces operational complexity that adds up fast.

The GIL (Global Interpreter Lock) in CPython means Python threads cannot execute bytecode in parallel. When an agent needs to call a database, query an external API, and search a vector store simultaneously - all common in real workflows - Python's asyncio adds complexity while Go goroutines handle this naturally and cheaply. Assembled's engineering team confirmed this in practice, serving millions of monthly LLM requests on Go with minimal performance tuning.

Deployment is the other argument. A Go agent compiles to one binary. You copy it to a container and run it. No virtual environments, no requirements.txt version conflicts, no runtime surprises when the image builds on CI but behaves differently on the server. For teams maintaining dozens of microservices, this simplicity is real money.

Structured Outputs with Go Types

When an LLM returns structured data, Go's type system lets you pin the expected shape to a struct: code that misuses a field fails to compile, and a response that does not fit fails at parse time rather than at 3 AM in production. Gollem generates JSON schemas automatically from struct tags.

// JSON Schema is generated from Go struct tags automatically
type AnalysisResult struct {
	Summary  string   `json:"summary" description:"Brief summary" required:"true"`
	Severity string   `json:"severity" description:"Risk level" enum:"low,medium,high,critical"`
	Score    int      `json:"score" description:"Confidence 0-100" minimum:"0" maximum:"100"`
	Tags     []string `json:"tags" description:"Relevant classification tags"`
}

The LLM receives this schema and must produce output matching it. Enum violations and out-of-range values surface at parse time, not silently in production data. This is one of the more underrated advantages of building agent infrastructure in Go - the type system does work that would otherwise require runtime validation code.
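To make the parse-time behavior concrete, here is a small sketch that uses only encoding/json. It assumes the enum and range constraints are checked by hand in a hypothetical parseAnalysis helper. How gollem itself enforces the generated schema is not shown here.

```go
package main

import (
	"encoding/json"
	"errors"
	"fmt"
)

// AnalysisResult mirrors the struct above, reduced to plain json tags.
type AnalysisResult struct {
	Summary  string   `json:"summary"`
	Severity string   `json:"severity"`
	Score    int      `json:"score"`
	Tags     []string `json:"tags"`
}

// allowedSeverity encodes the enum constraint from the schema.
var allowedSeverity = map[string]bool{
	"low": true, "medium": true, "high": true, "critical": true,
}

// parseAnalysis (hypothetical helper) decodes raw model output and rejects
// anything that violates the type, enum, or range constraints.
func parseAnalysis(raw []byte) (AnalysisResult, error) {
	var r AnalysisResult
	if err := json.Unmarshal(raw, &r); err != nil {
		// Type mismatches such as "score": "high" are caught here.
		return r, fmt.Errorf("decode: %w", err)
	}
	if r.Summary == "" {
		return r, errors.New("summary is required")
	}
	if !allowedSeverity[r.Severity] {
		return r, fmt.Errorf("severity %q is not in the enum", r.Severity)
	}
	if r.Score < 0 || r.Score > 100 {
		return r, fmt.Errorf("score %d is outside 0-100", r.Score)
	}
	return r, nil
}

func main() {
	raw := []byte(`{"summary":"disk full","severity":"urgent","score":90}`)
	if _, err := parseAnalysis(raw); err != nil {
		fmt.Println("rejected:", err)
	}
}
```

A response with an unknown severity or a string where a number belongs never reaches business logic. It is rejected at the boundary with a specific error.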

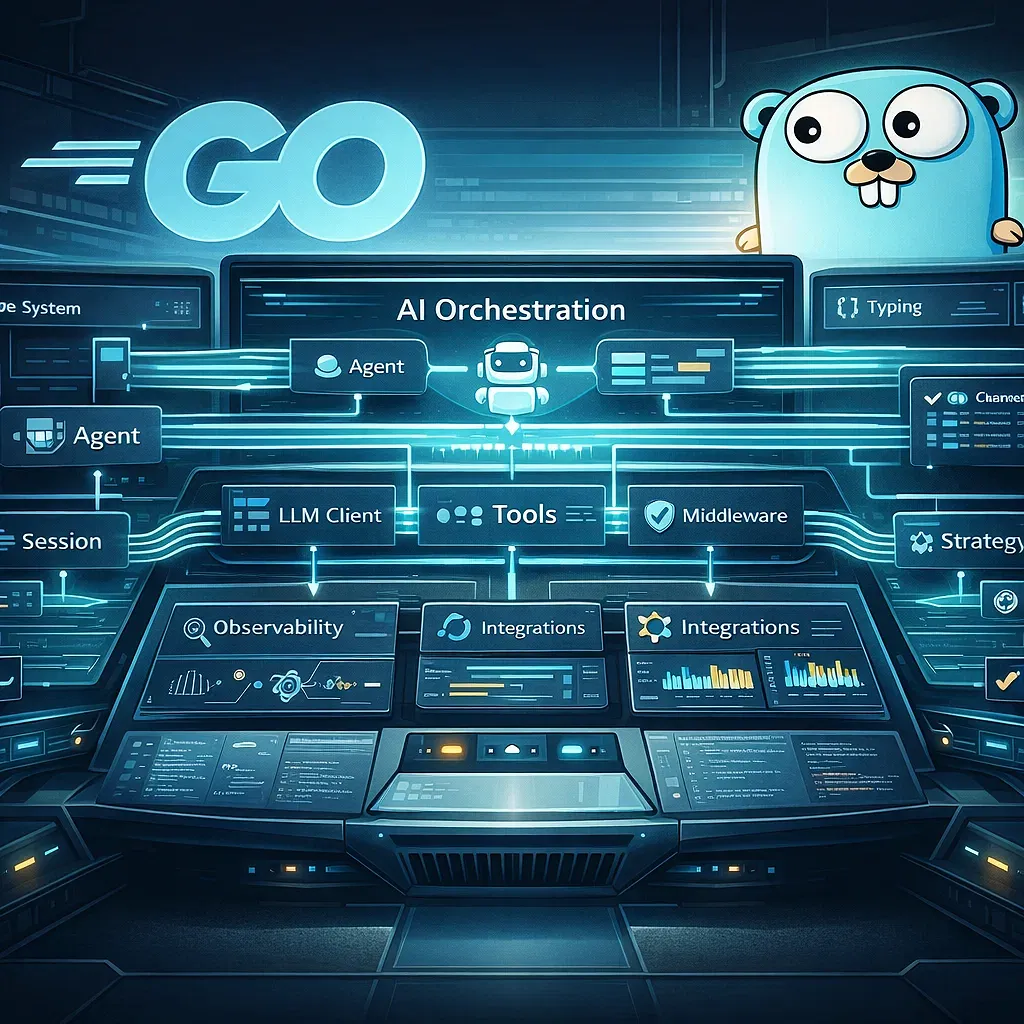

How Gollem Is Structured

Gollem is built around five components that each handle one responsibility: a unified LLM client, a session and history layer, a tool execution pipeline, composable middleware, and pluggable behavioral strategies. They fit together through well-defined interfaces, which is what makes the whole thing testable and maintainable.

Agent
+- LLM Client (OpenAI / Claude / Gemini)
+- Session
|  +- History (portable, serializable, provider-convertible)
+- Tools
|  +- Custom Tools (ToolSpec + Run)
|  +- MCP ToolSets (Stdio / StreamableHTTP)
+- Middleware Pipeline
|  +- Compacter (token limit handling)
|  +- Tool Middleware (policy enforcement, audit)
|  +- Custom Middleware
+- Strategy
   +- Simple (default loop)
   +- ReAct
   +- Plan&Execute
   +- Reflexion

The part we found most valuable in production is the History layer. Conversations are serializable and portable across providers. You can store a session in Redis, have any worker pick it up, convert it from OpenAI format to Claude format if you switch providers, and continue where you left off. This enables stateless worker architectures - no sticky sessions, no shared memory, clean horizontal scaling. For distributed systems with queues and workers, this matters more than most framework features.
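The load-append-save cycle behind a stateless worker can be sketched as follows. Message, History, Store, and handleTurn are hypothetical shapes invented for this illustration, and the in-memory store stands in for Redis or PostgreSQL. None of these are gollem's actual types.

```go
package main

import (
	"encoding/json"
	"fmt"
	"sync"
)

// Message and History are hypothetical provider-neutral shapes.
type Message struct {
	Role    string `json:"role"`
	Content string `json:"content"`
}

type History struct {
	Messages []Message `json:"messages"`
}

// Store is the minimal contract a worker needs from external storage.
type Store interface {
	Save(sessionID string, data []byte) error
	Load(sessionID string) ([]byte, error)
}

// memStore is an in-memory stand-in for Redis or PostgreSQL.
type memStore struct {
	mu   sync.Mutex
	data map[string][]byte
}

func newMemStore() *memStore { return &memStore{data: map[string][]byte{}} }

func (s *memStore) Save(id string, b []byte) error {
	s.mu.Lock()
	defer s.mu.Unlock()
	s.data[id] = b
	return nil
}

func (s *memStore) Load(id string) ([]byte, error) {
	s.mu.Lock()
	defer s.mu.Unlock()
	b, ok := s.data[id]
	if !ok {
		return nil, fmt.Errorf("session %q not found", id)
	}
	return b, nil
}

// handleTurn is what any worker does for one turn: load, append, save.
// For brevity, a failed load is treated as a brand-new session.
func handleTurn(st Store, id string, msg Message) (History, error) {
	var h History
	if raw, err := st.Load(id); err == nil {
		if err := json.Unmarshal(raw, &h); err != nil {
			return h, err
		}
	}
	h.Messages = append(h.Messages, msg)
	raw, err := json.Marshal(h)
	if err != nil {
		return h, err
	}
	return h, st.Save(id, raw)
}

func main() {
	st := newMemStore()
	// Two calls stand in for two different workers sharing only the store.
	if _, err := handleTurn(st, "s1", Message{Role: "user", Content: "hello"}); err != nil {
		fmt.Println("error:", err)
		return
	}
	h, err := handleTurn(st, "s1", Message{Role: "assistant", Content: "hi"})
	fmt.Println(len(h.Messages), err)
}
```

Because the worker keeps nothing between turns, any replica can serve the next request for the same session.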

The Compacter middleware solves token limits automatically. When a conversation grows past the model's context window, the compacter summarizes the oldest 70% of messages using the LLM itself, then retries. Instead of crashing, the agent preserves the semantic thread of the conversation. We have never had to think about this problem in production since adding it to our stack.

Loop protection is built in. The default limit is 128 iterations, configurable with WithLoopLimit. An agent caught in a reasoning loop burns tokens and blocks resources, so hard limits are a production requirement.

Tool Execution and Policy Enforcement

Tools are the interface between your LLM agent and the real world. When an agent can query databases, send emails, modify records, or call external APIs, the security of tool execution is the security of your system. Gollem's approach here is what made us confident using it for client work.

Each tool defines a typed ToolSpec and a Run method. The spec is declarative - parameter names, types, descriptions, constraints, and defaults are all explicit. The LLM receives the spec as JSON Schema and can only invoke tools within defined boundaries.

type SearchTicketsTool struct{}

func (t SearchTicketsTool) Spec() gollem.ToolSpec {
	return gollem.ToolSpec{
		Name:        "search_tickets",
		Description: "Search support tickets by query and optional status filter",
		Parameters: map[string]*gollem.Parameter{
			"query":  gollem.String().WithDescription("Search query").Required(),
			"status": gollem.String().WithDescription("Optional status: open|closed|pending"),
			"limit":  gollem.Integer().WithDescription("Max results").WithDefault(20),
		},
	}
}

func (t SearchTicketsTool) Run(ctx context.Context, args map[string]any) (map[string]any, error) {
	// Real integration: DB, Elasticsearch, Jira, Zendesk
	// Production: add timeouts, retries, circuit breakers here
	results := []map[string]any{} // placeholder: fill from the real backend query
	return map[string]any{"tickets": results}, nil
}

Defining tools is not enough. An agent that accepts untrusted input - user prompts, external documents, data from other systems - can be manipulated into calling tools it should not call, or with parameters it should not accept. This is not theoretical. It is the core attack surface of production agents. Gollem's answer is ToolMiddleware: a composable wrapper around every tool execution that lets you enforce policy at the framework level.

func AllowlistTools(allowed map[string]struct{}, logger *slog.Logger) gollem.ToolMiddleware {
	return func(next gollem.ToolHandler) gollem.ToolHandler {
		return func(ctx context.Context, req *gollem.ToolExecRequest) (*gollem.ToolExecResponse, error) {
			name := req.Tool.Name
			if _, ok := allowed[name]; !ok {
				logger.Warn("tool blocked by policy", "tool", name)
				return &gollem.ToolExecResponse{
					Result: map[string]any{"error": "tool is not allowed by policy"},
				}, nil
			}

			resp, err := next(ctx, req)

			// Audit trail: who called what, how long, any errors
			if resp != nil {
				logger.Info("tool executed",
					"tool", name,
					"duration_ms", resp.Duration,
					"err", resp.Error,
				)
			}
			return resp, err
		}
	}
}

This pattern is familiar to anyone who has written HTTP middleware in Go. You can stack allowlisting, rate limiting, RBAC, and audit logging into a pipeline where each layer does one thing. We apply this on every agent we build for clients - the policy enforcement point sits at the framework level, independent of agent logic. If the LLM is manipulated into requesting a tool not on the allowlist, it is blocked and logged before execution.

MCP Integration and Real Security Context

The Model Context Protocol (MCP) standardizes how agents connect to external tools and data sources. Gollem supports two transports: Stdio for local MCP servers and StreamableHTTP for remote ones. MCP tools load as a ToolSet and go through the same middleware pipeline as any other tool.

That uniformity matters because MCP has had real security problems. In 2025, critical vulnerabilities appeared in Anthropic's official Git MCP server, including remote code execution via prompt injection. Researchers found over 1,800 MCP servers exposed without authentication. An unofficial Postmark MCP server with 1,500 weekly downloads was modified to silently BCC all emails to an attacker. These are production incidents, not hypotheticals.

When we connect MCP servers in client projects, we treat them zero-trust: strict allowlists, audit logging on every call, isolated execution environments. Gollem's middleware layer makes this uniform regardless of whether the tool comes from a built-in implementation or an external MCP server.

Behavioral Strategies: Choosing the Right Execution Pattern

Gollem provides four built-in strategies: simple loop, ReAct, Plan&Execute, and Reflexion. Each is a different execution pattern suited to different types of tasks. The framework makes this choice explicit - you pick a strategy when creating the agent, and the behavior follows from that decision.

| Strategy | How it works | Best for | Trade-off |
|---|---|---|---|
| Simple loop | Direct prompt-tool-response cycle | Lookups, simple Q&A, single-step actions | Minimal overhead, limited reasoning depth |
| ReAct | Thought - Action - Observation cycle | Dynamic tasks where next step depends on prior result | More tokens, better handling of unknown paths |
| Plan&Execute | Full plan first, then sequential execution | Multi-step workflows, SRE runbooks, onboarding flows | Predictable, token-efficient with small planning model |
| Reflexion | ReAct + self-evaluation + memory | Code generation, iterative improvement tasks | Highest token cost, but self-correcting over cycles |

In practice, we use ReAct for most client-facing agents - it handles the "I need to check a few things before answering" pattern well without requiring a full upfront plan. Plan&Execute is our go-to for automation workflows where the client needs to see the plan before execution starts, which matters in regulated environments. Reflexion we use selectively - the self-evaluation loop produces better results for code-heavy tasks, but the token cost requires budgeting.

// The four snippets below are alternatives; use one construction per agent.

// Simple loop (default)
agent, _ := gollem.New(ctx, llmClient, gollem.WithTools(myTools))

// ReAct strategy
agent, _ := gollem.New(ctx, llmClient,
	gollem.WithTools(myTools),
	gollem.WithStrategy(react.New()),
)

// Plan&Execute - planning model can differ from execution model
agent, _ := gollem.New(ctx, llmClient,
	gollem.WithTools(myTools),
	gollem.WithStrategy(planexec.New()),
)

// Loop limit - always set this in production
agent, _ := gollem.New(ctx, llmClient,
	gollem.WithTools(myTools),
	gollem.WithStrategy(react.New()),
	gollem.WithLoopLimit(20),
)

Strategies compose. Reflexion can run on top of ReAct for iterative improvement with adaptive reasoning. The Strategy interface is open, so you can implement custom execution patterns for domain-specific requirements. We have written a few of these for clients with unusual multi-step workflows that do not map cleanly to the built-in patterns.

Observability and Testing in Production

Standard monitoring tools do not cover LLM agents. They track HTTP latency and error rates, but an agent that takes 45 seconds is not necessarily broken - it might be mid-reasoning through a complex Plan&Execute workflow. Without agent-specific observability, you cannot distinguish "working as expected" from "stuck in a loop burning tokens." Gollem's execution tracing captures the full reasoning chain: strategy applied, tools called, parameters passed, step durations, and what the agent decided next. Backends are pluggable - route to Datadog, Jaeger, or your own storage through a single interface.

The metrics we monitor for every production agent deployment:

- Token usage per request - broken down by reasoning step, not just total input/output

- Latency distribution - P50 and P99, including tool call latency separately from LLM latency

- Policy violations - tool calls blocked by allowlist or RBAC middleware

- Cost per execution - dollar cost by provider and model, essential for SLA budgeting

- Loop terminations - how often agents hit the loop limit, which signals reasoning issues

Testing Agent Logic Without API Calls

Gollem provides mock implementations for LLMClient, Session, and Tool. This means you can write comprehensive tests for agent behavior without a single API call. A mock LLM client returns predetermined responses, so you can test how your agent handles an unexpected format, a failed tool call, or hitting the loop limit - without waiting for real API responses or paying per token.

Integration tests use environment variable guards and skip automatically when credentials are absent. This is standard engineering discipline, but it is worth calling out because many AI frameworks treat testing as an afterthought. When you can write a regression test for a specific agent behavior bug, you can ship updates to production with confidence.

This testability is one of the signals we look for when evaluating frameworks for client projects. It means the framework authors thought about production use from the start - not just "does it work" but "can you verify it keeps working after changes."

A Complete Production Agent in Under 50 Lines

Here is a support ticket analysis agent that pulls together everything covered above: LLM client setup, tool registration, middleware stack, ReAct strategy, and loop limit. This is close to what we ship for client support automation workflows.

package main

import (
	"context"
	"log/slog"
	"os"

	"github.com/m-mizutani/gollem"
	"github.com/m-mizutani/gollem/llm/openai"
	"github.com/m-mizutani/gollem/strategy/react"
)

func main() {
	ctx := context.Background()
	logger := slog.New(slog.NewJSONHandler(os.Stdout, nil))

	// 1. LLM client - swap openai for claude or gemini with no other changes
	client, err := openai.New(ctx)
	if err != nil {
		logger.Error("failed to create LLM client", "err", err)
		os.Exit(1)
	}

	// 2. Tools available to the agent
	tools := []gollem.Tool{
		SearchTicketsTool{},
		GetCustomerHistoryTool{},
		UpdateTicketStatusTool{},
	}

	// 3. Allowlist - agent can only call these three tools
	allowed := map[string]struct{}{
		"search_tickets":       {},
		"get_customer_history": {},
		"update_ticket_status": {},
	}

	// 4. Assemble agent: middleware + strategy + loop limit
	agent, err := gollem.New(ctx, client,
		gollem.WithTools(tools),
		gollem.WithToolMiddleware(AllowlistTools(allowed, logger)),
		gollem.WithStrategy(react.New()),
		gollem.WithLoopLimit(20),
	)
	if err != nil {
		logger.Error("failed to create agent", "err", err)
		os.Exit(1)
	}

	// 5. Run
	result, err := agent.Prompt(ctx, "Analyze ticket #4521 and suggest a resolution")
	if err != nil {
		logger.Error("agent execution failed", "err", err)
		os.Exit(1)
	}

	logger.Info("agent completed", "result", result.Content)
}

The allowlist limits the agent to exactly three tools, regardless of what the prompt tries to get it to do. ReAct lets it search for the ticket, check the customer's history, and reason about both before suggesting a resolution. The loop limit of 20 means it will not run indefinitely if something goes wrong with the reasoning chain. This is the baseline configuration we use as a starting point for every new agent project.

Deployment: One Binary, No Surprises

Gollem agents compile to a single binary with zero runtime dependencies. Our deployment pattern:

- Build: go build -o agent-service ./cmd/agent - one binary, runs anywhere

- Container: Distroless or scratch base image - binary plus TLS certificates, nothing else

- Configuration: Environment variables for LLM keys, tool endpoints, middleware settings

- Scaling: Horizontal Kubernetes replicas - stateless because session history loads from external storage (Redis or PostgreSQL)

Stateless design means any replica handles any request. Session history loads from the store, the agent executes, history saves back. No sticky sessions, no coordination between instances. This scales cleanly and simplifies incident response - you can kill any replica at any time without losing conversation state.

What We Conclude After Using Gollem in Production

Gollem's value is not in novelty - it is in having made the right engineering decisions: middleware for policy, portable history for stateless architecture, explicit behavioral strategies, mock interfaces for testing, and hard limits for loop protection. These are the things that make the difference between a demo that works and a service that you can monitor, audit, and trust at 2 AM.

The AI agents market is growing fast, and the cancellation of over 40% of agentic AI projects that Gartner predicts points at teams shipping agents without the operational discipline to run them. The teams that win are the ones treating agents as service components - with SLAs, security policies, cost controls, and proper testing.

Key takeaways from our experience with gollem:

- Go's type system turns malformed LLM responses into parse-time errors and field misuse into compile errors - fewer surprises in production than Python equivalents

- The ToolMiddleware pipeline gives you a policy enforcement point independent of agent logic - essential for zero-trust tool access

- Portable History enables stateless worker architectures - clean horizontal scaling without sticky sessions

- Four built-in strategies cover most production use cases; the open Strategy interface handles the rest

- Mock interfaces make agent behavior testable without API calls - regression tests are possible and worth writing

At Webdelo, we help companies integrate AI agent systems into B2B workflows - from support automation to internal knowledge retrieval to multi-step business process agents. If you are planning an agentic AI project and want to discuss the architecture before writing a line of code, we are happy to talk through the tradeoffs with you.

Frequently Asked Questions

What is Gollem and what problems does it solve?

Gollem is an open-source Go framework designed for production-grade LLM agent applications. It addresses the fundamental challenge of running LLM agents reliably in production environments with proper governance, security policies, and operational controls. While many frameworks focus on how to call an LLM, Gollem solves how to run LLM agents securely, auditably, and with predictable behavior at scale.

Why is Go a better choice for production LLM agents compared to Python?

Go provides three critical advantages for production agents: static typing that pins LLM response schemas to concrete types so mismatches fail at the parsing boundary rather than deep in production code, goroutines for true parallel tool execution without GIL constraints, and single-binary deployment that eliminates runtime dependency headaches. Python works well for prototypes, but for services handling thousands of concurrent agent sessions, Go's operational simplicity and performance characteristics prove superior in practice.

How does Gollem enforce tool execution policies and prevent unauthorized tool calls?

Gollem uses a composable ToolMiddleware pipeline that sits between the LLM and tool execution. Each middleware layer enforces policies like allowlisting, rate limiting, and RBAC before execution occurs. This policy enforcement point exists independently of agent logic, so even if the LLM is manipulated through prompt injection, disallowed tools are blocked and logged before execution. This zero-trust approach is essential for production agents handling untrusted inputs.

What are the four behavioral strategies available in Gollem and when should each be used?

Gollem provides four strategies: Simple loop for direct prompt-response cycles, ReAct for dynamic tasks requiring reasoning, Plan-and-Execute for multi-step workflows where clients need to see the plan first, and Reflexion for iterative improvement with self-evaluation. In practice, ReAct is most commonly used for client-facing agents. Plan-and-Execute is preferred for automation workflows in regulated environments. Reflexion produces superior results for code-heavy tasks but incurs higher token costs.

How does Gollem handle token limits in long conversations?

Gollem includes a Compacter middleware that automatically handles token limit errors. When a conversation exceeds the model's context window, the Compacter summarizes the oldest 70% of messages using the LLM itself, then retries the request. This preserves semantic continuity instead of crashing or truncating blindly. The default loop limit of 128 iterations also prevents runaway agents from burning tokens indefinitely.

Can Gollem integrate with MCP servers and external tools?

Yes, Gollem supports the Model Context Protocol (MCP) with two transport types: Stdio for local tool servers and StreamableHTTP for remote ones. MCP tools load as a ToolSet and pass through the same middleware pipeline as built-in tools. This means allowlisting, rate limiting, and audit logging apply uniformly to all tools, whether they are native, custom, or loaded from an MCP server.

How do you test LLM agents built with Gollem without making real API calls?

Gollem provides mock implementations for its core interfaces: LLMClient, Session, and Tool. You can write comprehensive unit tests by configuring mock LLM clients to return predetermined responses and simulating various scenarios like unexpected formats, tool failures, or loop limit breaches. Integration tests that require real API keys use environment variable guards and skip automatically when credentials are unavailable.

How does the History layer enable stateless agent architecture and horizontal scaling?

Gollem's History layer stores conversations in a serializable, provider-agnostic format that can be persisted to external storage like Redis or PostgreSQL. Any worker can retrieve a session, continue the conversation, and save it back regardless of which worker executed previous steps. This eliminates sticky sessions and shared state requirements, enabling clean horizontal scaling without coordination between instances. Workers can be scaled, replaced, or restarted without losing conversation state.

How does MCP integration in Gollem maintain security when connecting external tools and services?

Gollem treats MCP (Model Context Protocol) servers with zero-trust: all MCP tools go through the same ToolMiddleware pipeline as built-in tools, enabling consistent allowlisting, audit logging, and access control regardless of whether tools are native or from external MCP servers. This unified security approach is critical because MCP has experienced real production vulnerabilities including remote code execution via prompt injection in official servers and unauthorized email forwarding in community servers. Middleware-level policy enforcement protects against these threats.